What is the Hadoop Ecosystem? – A Beginner's Guide

The Hadoop ecosystem is one of the most widely used approaches to big data management. But what is it really?

That is exactly what we discuss in this blog article. We will also see how it changed (more like completely transformed!) data management.

Hadoop is an open-source software framework that stores and processes enormous amounts of data across many machines. Each of its components handles a different part of the job, such as storage, processing, or resource management.

Taken together, the Hadoop system lets us store and process huge quantities of data quickly and at low cost.

Hadoop can store and analyze large amounts of data, helping companies get the most out of the data they have.

We can use the raw data stored in Hadoop to run complex analyses (and find new insights) and to train machine learning models (which require vast amounts of data).

Hadoop has transformed big data management and analysis, as you will see here.

What is Big Data, and what are its challenges?

To appreciate the Hadoop ecosystem, we first need to understand "big data" and its difficulties. Businesses generate and receive massive volumes of structured, semi-structured, and unstructured data daily.

This data comes from many sources that we use every day (social media, cameras, smart gadgets, etc.).

Big data's scale, speed, and diversity create problems. Traditional data handling approaches struggle to keep up with the volume, pace, and shape of the data. Hadoop is designed to handle massive volumes of data quickly and flexibly.

Hadoop lets firms store and process data across many machines, an approach known as "distributed computing." Because the machines work in parallel, data can be analyzed more quickly.

The 5 Vs of Big Data:

- Volume: The sheer volume of data being generated is immense and growing rapidly. Traditional data management tools struggle to handle this scale.

- Variety: Big data comes in various forms, including structured, semi-structured, and unstructured data. This diversity makes processing and analysis harder.

- Velocity: Data is generated and collected at an incredibly rapid pace, making it challenging to process and analyze in real time.

- Veracity: Big data can be noisy, inaccurate, or incomplete due to its diverse sources and rapid generation.

- Value: Extracting meaningful insights and value from big data requires sophisticated analysis techniques due to its volume and complexity.

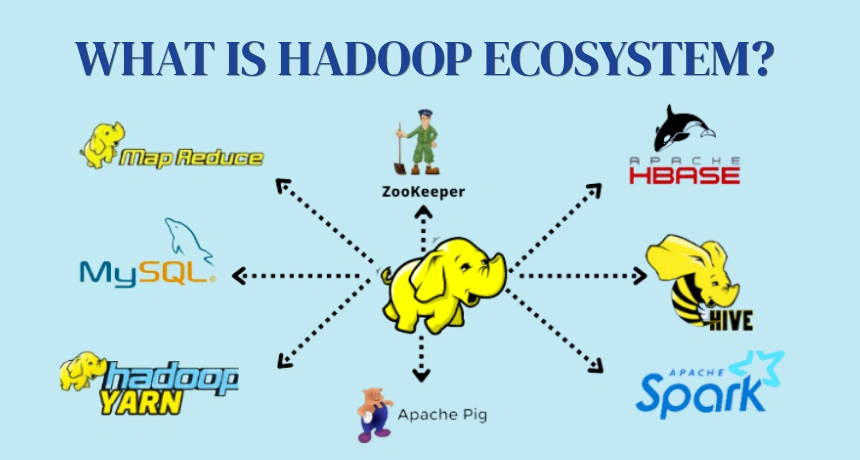

Components of the Hadoop Ecosystem

The Hadoop ecosystem is made up of several components, each of which plays an important role in the big data processing pipeline. Here are the components of the Hadoop ecosystem:

Hadoop Distributed File System (HDFS)

HDFS is the backbone of the Hadoop ecosystem. It provides a scalable, fault-tolerant storage solution for big data, and it is built to manage the challenges that big data poses:

- Dividing Data: HDFS divides data into manageable blocks, making storage and processing more efficient.

- Replication: Each block is replicated across several nodes to ensure fault tolerance and availability.

- Checksums: HDFS uses checksums to verify data integrity, enhancing data reliability.

- Designed for Large Files: HDFS is built to manage very large files and can scale to petabytes of data.

- Fault Tolerance: Because blocks are replicated, data is not lost even if individual nodes fail.

- Parallel Processing: Because blocks are spread across nodes, many nodes can read and write data at the same time.
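To make the block, replication, and checksum ideas above more concrete, here is a minimal Python sketch. It is not HDFS code: the function names, the tiny 64-byte block size, the node names, and the round-robin placement are all assumptions made for illustration (real HDFS uses much larger blocks and a rack-aware placement policy), and `zlib.crc32` simply stands in for whatever checksum the file system uses.

```python
import zlib

def split_into_blocks(data: bytes, block_size: int) -> list[bytes]:
    """Cut a byte string into fixed-size blocks (the last one may be shorter)."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]

def place_replicas(num_blocks: int, nodes: list[str], replication: int) -> dict[int, list[str]]:
    """Assign each block to `replication` distinct nodes (simple round-robin, an assumption)."""
    if replication > len(nodes):
        raise ValueError("replication factor cannot exceed the number of nodes")
    return {
        block_id: [nodes[(block_id + r) % len(nodes)] for r in range(replication)]
        for block_id in range(num_blocks)
    }

def checksum(block: bytes) -> int:
    """Checksum used to detect corrupted blocks (CRC32 as a stand-in)."""
    return zlib.crc32(block)

# Hypothetical "file" and cluster.
data = b"hadoop stores big files as blocks " * 10
nodes = ["node1", "node2", "node3", "node4"]

blocks = split_into_blocks(data, block_size=64)
placement = place_replicas(len(blocks), nodes, replication=3)
checksums = [checksum(block) for block in blocks]

# Simulate one node failing: every block should still have live replicas.
failed = "node2"
survivors = {
    block_id: [n for n in replica_nodes if n != failed]
    for block_id, replica_nodes in placement.items()
}

for block_id, live_nodes in survivors.items():
    print(f"block {block_id}: still available on {live_nodes}")
```

Because every block lives on three different nodes, losing any single node still leaves at least two copies of each block, which is the intuition behind the fault tolerance described above.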

MapReduce and its role in Big Data processing

MapReduce is a powerful tool for processing large datasets. It operates in two phases:

- Map Phase: Data is divided into smaller chunks, and mappers process these chunks independently.

- Reduce Phase: The outputs of the mappers are aggregated and processed by reducers to produce final results.

MapReduce is both a programming model and a processing framework in the Hadoop ecosystem. It simplifies writing distributed data processing applications by running the work in parallel.

A large dataset is split into smaller chunks, and each chunk is processed by a separate map task, independently of the others and typically on a different node.

The map results are then combined, or "reduced", into the final output. Running these tasks in parallel speeds up data analysis and processing.
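The two phases can be sketched in plain Python with the classic word count example. This is only an illustration of the programming model, not Hadoop's Java API: the function names and the sample chunks are made up, everything runs in one process, and the intermediate `shuffle` step (grouping mapper output by key before reducing) is spelled out here even though the text above does not name it.

```python
from collections import defaultdict

def map_phase(chunk: str) -> list[tuple[str, int]]:
    """Mapper: emit a (word, 1) pair for every word in one chunk."""
    return [(word.lower(), 1) for word in chunk.split()]

def shuffle(pairs: list[tuple[str, int]]) -> dict[str, list[int]]:
    """Group all mapper output by key so each reducer sees one word's values."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reduce_phase(key: str, values: list[int]) -> tuple[str, int]:
    """Reducer: combine all values for one key into a single result."""
    return key, sum(values)

def word_count(chunks: list[str]) -> dict[str, int]:
    # On a real cluster each chunk would be mapped on a different node.
    mapped = [pair for chunk in chunks for pair in map_phase(chunk)]
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())

chunks = ["big data needs big tools", "hadoop handles big data"]
counts = word_count(chunks)
print(counts)
```

Since each call to `map_phase` only looks at its own chunk, the chunks can be processed in any order or at the same time, which is what makes the model easy to distribute.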

MapReduce is efficient for many types of data processing, but its batch-oriented nature makes it a poor fit for real-time applications.

Apache Spark and its integration with Hadoop

Apache Spark has emerged as a faster and more flexible alternative to MapReduce. It supports both batch processing and real-time streaming, so we can use it for a much wider range of applications.

- Faster Processing: Spark's in-memory processing capabilities significantly accelerate data processing compared to traditional disk-based systems.

- Real-time Streaming: Spark Streaming enables real-time data processing, allowing applications to respond to data as it arrives.

- Fast, General-Purpose Cluster Computing: Apache Spark is a rapid and versatile cluster computing system in the Hadoop ecosystem.

- Diverse Data Processing: It handles various big data tasks: batch jobs, real-time streaming, machine learning, and graph processing.

- Interactive and Expressive: Spark offers an interactive, expressive programming model for complex data analysis.

- Contrast with MapReduce: Compared to MapReduce, Spark provides a more interactive approach and is well suited to intricate analysis.

- Seamless Hadoop Integration: Spark integrates well with Hadoop, combining Hadoop's scalability with Spark's speed for data processing.
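The in-memory advantage mentioned above can be illustrated with a toy sketch. This is plain Python, not the Spark API: the `Dataset` class, its `cache` method, and `slow_source` are hypothetical names, and the generator merely stands in for an expensive read from disk. The idea loosely mirrors keeping a dataset in memory so that several computations can reuse it.

```python
class Dataset:
    """Toy dataset that counts how often its underlying source is read."""

    def __init__(self, load):
        self._load = load      # function that produces the records
        self._cached = None    # in-memory copy, filled by cache()
        self.loads = 0         # number of times the source was read

    def _read(self):
        self.loads += 1
        return list(self._load())

    def cache(self):
        """Read the source once and keep the records in memory."""
        self._cached = self._read()
        return self

    def records(self):
        """Return cached records if present, otherwise re-read the source."""
        return self._cached if self._cached is not None else self._read()

def slow_source():
    # Stand-in for an expensive read from disk.
    yield from [3, 1, 4, 1, 5, 9, 2, 6]

# Without caching, every computation goes back to the source.
uncached = Dataset(slow_source)
total = sum(uncached.records())
largest = max(uncached.records())

# With caching, the source is read once and reused from memory.
cached = Dataset(slow_source).cache()
cached_total = sum(cached.records())
cached_largest = max(cached.records())

print(f"uncached reads: {uncached.loads}, cached reads: {cached.loads}")
```

Both versions compute the same answers; the cached one just avoids the second trip to the source, and that saving grows with every additional pass over the data.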

Apache Hive and its use for data warehousing

Apache Hive is a data warehouse framework in the Hadoop ecosystem. It offers HiveQL, a SQL-like language for querying and analyzing large Hadoop datasets.

Hive converts queries into MapReduce or Spark jobs, so users can explore data without writing those jobs by hand.

HiveQL is familiar to SQL users, which lets a wide range of people work with the data. Hive also supports adding or modifying data without affecting existing data.
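To give a feel for what "converting a query into a job" means, the sketch below runs the same aggregation twice: once as a SQL query and once as a hand-written map and reduce. Several things here are assumptions: Python's built-in `sqlite3` stands in for a SQL engine (it is not Hive, and the query is ordinary SQL rather than HiveQL), the `sales` table and its columns are invented, and `run_as_map_reduce` is only a rough picture of the kind of job a query could be translated into.

```python
import sqlite3
from collections import defaultdict

# Hypothetical sales records: (category, amount).
rows = [
    ("books", 12.0),
    ("games", 30.0),
    ("books", 8.0),
    ("music", 5.0),
    ("games", 10.0),
]

# 1) The declarative view: a SQL-style GROUP BY query.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (category TEXT, amount REAL)")
conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
sql_result = dict(
    conn.execute("SELECT category, SUM(amount) FROM sales GROUP BY category")
)
conn.close()

# 2) The same logic written by hand as a map step and a reduce step.
def map_row(row: tuple[str, float]) -> tuple[str, float]:
    """Map: emit the GROUP BY column as the key and the amount as the value."""
    category, amount = row
    return category, amount

def run_as_map_reduce(records: list[tuple[str, float]]) -> dict[str, float]:
    grouped = defaultdict(list)
    for key, value in map(map_row, records):
        grouped[key].append(value)
    # Reduce: apply the aggregate (SUM) to each key's values.
    return {key: sum(values) for key, values in grouped.items()}

mr_result = run_as_map_reduce(rows)
print(sql_result == mr_result)
```

The query is one line while the hand-written version needs explicit grouping and aggregation, which is the convenience a SQL-like layer offers.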

Apache HBase and its role in real-time data processing

Apache HBase is a scalable and consistent NoSQL database. It is built on top of Hadoop and designed for real-time processing of structured and semi-structured data.

HBase offers quick data retrieval, which makes it ideal for low-latency applications (such as real-time analytics). It provides automatic sharding for scalability and replication for fault tolerance.
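Sharding by row key can be sketched as follows. This is a simplified illustration and not the HBase client API: `ShardedTable`, the split keys, and the server names are hypothetical, and the split points are fixed up front, whereas a real system would split ranges automatically as they grow.

```python
from bisect import bisect_right

class ShardedTable:
    """Toy key-value table partitioned into contiguous row-key ranges."""

    def __init__(self, split_keys: list[str], servers: list[str]):
        # N split keys define N + 1 key ranges, one per server.
        if len(servers) != len(split_keys) + 1:
            raise ValueError("need exactly one server per key range")
        self.split_keys = sorted(split_keys)
        self.servers = servers
        self.shards = [dict() for _ in servers]

    def _shard_index(self, row_key: str) -> int:
        # A key equal to a split key belongs to the range that starts there.
        return bisect_right(self.split_keys, row_key)

    def server_for(self, row_key: str) -> str:
        return self.servers[self._shard_index(row_key)]

    def put(self, row_key: str, value: str) -> None:
        self.shards[self._shard_index(row_key)][row_key] = value

    def get(self, row_key: str):
        # Only one shard has to be consulted, which keeps lookups fast.
        return self.shards[self._shard_index(row_key)].get(row_key)

table = ShardedTable(split_keys=["h", "p"], servers=["server1", "server2", "server3"])
for name in ["alice", "mike", "zoe"]:
    table.put(name, f"profile of {name}")

print(table.server_for("alice"), table.server_for("mike"), table.server_for("zoe"))
print(table.get("mike"))
```

Because a lookup only touches the shard that owns the key, adding more servers and ranges spreads the load without slowing down individual reads.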

Apache Pig and its use for data analysis

- High-Level Data Analysis: Apache Pig is a platform to analyze large datasets in Hadoop.

- Pig Latin Language: It uses Pig Latin, a straightforward scripting language for data analysis.

- Compilation to MapReduce/Spark: Pig Latin scripts compile into efficient MapReduce or Spark jobs.

- Efficiency and Scalability: Enables scalable and efficient data analysis.

- Built-in Functions: Offers numerous built-in functions for data transformation and manipulation.

Additional Tools in Hadoop

YARN (Yet Another Resource Negotiator):

- YARN is a resource management framework used to manage cluster resources effectively.

- It allocates cluster resources among multiple applications so that they can share one Hadoop cluster.
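The resource-sharing idea can be sketched with a toy allocator. This is not YARN's API or its actual scheduling policy: the `Node` and `ResourceManager` classes, the memory figures, the application names, and the "pick the node with the most free memory" rule are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    name: str
    free_memory_mb: int

@dataclass
class ResourceManager:
    """Toy allocator that hands out fixed-size containers to applications."""
    nodes: list[Node]
    allocations: dict[str, list[str]] = field(default_factory=dict)

    def request(self, app: str, containers: int, memory_mb: int) -> int:
        """Try to grant `containers` containers; return how many were granted."""
        granted = 0
        for _ in range(containers):
            # Assumed policy: place each container on the node with most free memory.
            node = max(self.nodes, key=lambda n: n.free_memory_mb)
            if node.free_memory_mb < memory_mb:
                break  # the cluster is full for this container size
            node.free_memory_mb -= memory_mb
            self.allocations.setdefault(app, []).append(node.name)
            granted += 1
        return granted

cluster = ResourceManager(nodes=[Node("node1", 4096), Node("node2", 4096)])

# Two hypothetical applications compete for the same cluster.
granted_spark = cluster.request("spark-job", containers=3, memory_mb=2048)
granted_hive = cluster.request("hive-query", containers=2, memory_mb=2048)

print(f"spark-job got {granted_spark} containers, hive-query got {granted_hive}")
print(cluster.allocations)
```

The second application receives fewer containers than it asked for because the first one already holds most of the memory, which is the kind of contention a resource manager has to arbitrate.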

Hadoop Common:

- Hadoop Common is a collection of utilities and libraries shared by other components in the ecosystem.

- It provides foundational tools for Hadoop operations and management.

Apache ZooKeeper:

- Apache ZooKeeper manages coordination and synchronization in a Hadoop cluster.

Benefits of the Hadoop Ecosystem

- Scalability: The Hadoop ecosystem scales out by adding more machines to accommodate growing data volumes.

- Fault Tolerance: Its fault-tolerant design ensures continuous operation, even in the presence of node failures.

- Cost-effectiveness: Hadoop's ability to run on commodity hardware makes it a cost-effective solution.

- Open Source: Being open source, the Hadoop ecosystem is freely available and can be customized as needed.

Future of Hadoop Ecosystem and Big Data processing

Hadoop remains important for handling huge amounts of data, and its ability to do so at scale is valuable for businesses.

Reportedly, over 90% of Fortune 500 companies use the Hadoop ecosystem, and the global Hadoop market is estimated to be worth $80 billion.

The Hadoop ecosystem will keep changing to keep up with new technologies and trends. Apache Spark and other tools are increasingly used alongside it for machine learning.

NoSQL systems like Apache HBase are becoming more popular as places to store data in real time. Cloud tools make Hadoop clusters easier to set up and run.

Combined with tools like Apache Kafka, Hadoop can handle data in real time. It will continue to shape how big data is handled in the future.

Key Points

I hope you now have a better understanding of the Hadoop ecosystem. Here are some key points to take away from this blog:

- Adaptable Solution: The Hadoop ecosystem adapts to changing technology and data needs.

- Scalable and Fault-Tolerant: Offers scalability and fault tolerance for big data challenges.

- Cost-Effective and Open-Source: Its open-source nature provides cost-effective solutions.

- Data Value: Enables organizations to extract insights from data.

- Components' Impact: Components like HDFS, MapReduce, Spark, Hive, HBase, and Pig manage and analyze data.